How Eido Works

From data to pixels — a tour of the rendering pipeline

The Big Picture

A scene in Eido is a plain Clojure map. It goes through a pipeline of pure data transformations — validation, compilation, lowering, rendering — and comes out as pixels. No GPU, no OpenGL, no mutable state. Just functions that turn data into data, until the last step paints it onto a BufferedImage using Java2D.

Each step is a pure function. The scene map goes in one end, a BufferedImage comes out the other. Every intermediate result is inspectable data — you can print it, diff it, serialize it. The rendering backend (currently Java2D) is isolated behind the concrete ops layer, so a future WebGL or Skia backend would only need to implement the final step.
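Here is a toy version of this shape, with the pipeline written as ordinary function composition. The stage names (validate-scene, compile-scene, lower-ir, run-pipeline) and their bodies are hypothetical stand-ins, not Eido's actual functions, and the rendering step is left out. The sketch only shows that each stage takes and returns plain data, so every intermediate can be kept and inspected.

```clojure
(ns eido-sketch.pipeline)

;; Hypothetical stand-in stages: each takes plain data and returns plain data.
(defn validate-scene [scene]
  ;; Throw early on a malformed scene; otherwise pass it through unchanged.
  (when-not (vector? (:image/nodes scene))
    (throw (ex-info "Invalid scene: :image/nodes must be a vector" {:scene scene})))
  scene)

(defn compile-scene [scene]
  ;; Wrap each node as a draw item inside a single-pass IR container.
  {:ir/size   (:image/size scene)
   :ir/passes [{:pass/id    :draw-main
                :pass/items (mapv (fn [node] {:item/node node}) (:image/nodes scene))}]})

(defn lower-ir [ir]
  ;; Flatten every pass into one vector of op maps.
  (vec (for [pass (:ir/passes ir)
             item (:pass/items pass)]
         (assoc (:item/node item) :op/kind :draw))))

(defn run-pipeline
  "Runs the stages in order and returns every intermediate result, keyed by
  stage, so each one can be printed, diffed or stored."
  [scene]
  (let [validated (validate-scene scene)
        ir        (compile-scene validated)
        ops       (lower-ir ir)]
    {:scene validated :ir ir :ops ops}))
```

Because each stage is an ordinary function, you can replace the last stage (for example, with a different backend) without touching the earlier ones.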

Step 1: The Scene Map

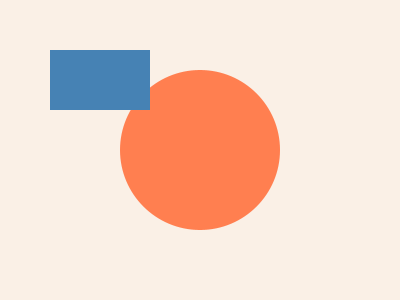

Everything starts here. A scene is a map with three keys — the canvas size, a background color, and a vector of nodes. Each node is itself a map describing a shape, a group, or a generator:

```clojure
{:image/size [400 300]
 :image/background [:color/name "linen"]
 :image/nodes
 [{:node/type :shape/circle
   :circle/center [200 150]
   :circle/radius 80
   :style/fill [:color/name "coral"]}
  {:node/type :shape/rect
   :rect/xy [50 50]
   :rect/size [100 60]
   :style/fill [:color/name "steelblue"]}]}
```

That's the entire input. No classes, no builder patterns, no inheritance. Nodes can be shapes (:shape/circle, :shape/rect, :shape/path), groups (:group with :group/children), or generators (:flow-field, :contour, :scatter) that produce shapes during lowering.
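A scene is just a map, so Clojure's ordinary collection functions serve as the editing API. In the sketch below, the circle helper is hypothetical. Eido's eido.scene namespace provides node constructors, but this page does not name them. The update and assoc-in calls then derive new scenes without changing the original.

```clojure
(ns eido-sketch.scene)

;; Hypothetical helper: returns a plain circle node map.
(defn circle [[cx cy] r fill-name]
  {:node/type     :shape/circle
   :circle/center [cx cy]
   :circle/radius r
   :style/fill    [:color/name fill-name]})

(def base-scene
  {:image/size       [400 300]
   :image/background [:color/name "linen"]
   :image/nodes      [(circle [200 150] 80 "coral")]})

;; Adding a node returns a new scene; base-scene is unchanged.
(def with-rect
  (update base-scene :image/nodes conj
          {:node/type  :shape/rect
           :rect/xy    [50 50]
           :rect/size  [100 60]
           :style/fill [:color/name "steelblue"]}))

;; Tweak a nested value by path.
(def bigger-circle
  (assoc-in base-scene [:image/nodes 0 :circle/radius] 120))
```

Persistent collections share structure, so generating many variations of a scene this way is cheap.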

Step 2: Validation

Before any work happens, the scene is validated against a comprehensive spec. Color formats, node types, transform syntax, path commands: all checked. If something's wrong, you get a clear error that points to exactly where the problem is.

For example, if you pass a negative radius, a made-up color format, and a number where a vector was expected:

```clojure
;; This scene has three deliberate mistakes:
;; {:image/nodes [{:circle/radius -5}
;;                {:style/fill [:color/invalid ...]}
;;                {:rect/size 100}]}
;;
;; Eido catches all of them before rendering:
```

```text
Invalid scene — 3 validation errors:

1. at [:image/nodes 0 :circle/radius]: positive number, got: -5
2. at [:image/nodes 1 :style/fill 0]: known color format, got: :color/invalid
3. at [:image/nodes 2 :rect/size]: vector of [w h], got: 100
```

Each error tells you the path into the data structure, what was expected, and what was found. Mistakes are caught at the boundary, before they turn into mysterious rendering glitches deep in the pipeline.

Validation uses clojure.spec.alpha with multimethod dispatch on :node/type. It's optional — bind eido/*validate* to false for faster REPL iteration once your scene structure is stable.
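Here is a minimal sketch of spec-based validation in this style. It uses s/multi-spec so that each :node/type value selects its own spec, and s/explain-data to recover the path to each failure. The spec definitions, the message format, and checked-scene are assumptions made for illustration. The specs in eido.spec are far more extensive, and a real implementation would turn predicates such as clojure.core/pos? into phrases like "positive number".

```clojure
(ns eido-sketch.validate
  (:require [clojure.spec.alpha :as s]))

;; Field specs (hypothetical and much smaller than Eido's real ones).
(s/def :node/type keyword?)
(s/def :circle/center (s/tuple number? number?))
(s/def :circle/radius (s/and number? pos?))
(s/def :rect/xy (s/tuple number? number?))
(s/def :rect/size (s/tuple number? number?))
(s/def :image/size (s/tuple pos-int? pos-int?))

;; One spec per node type, selected by the value of :node/type.
(defmulti node-spec :node/type)
(defmethod node-spec :shape/circle [_]
  (s/keys :req [:node/type :circle/center :circle/radius]))
(defmethod node-spec :shape/rect [_]
  (s/keys :req [:node/type :rect/xy :rect/size]))

(s/def ::node (s/multi-spec node-spec :node/type))
(s/def :image/nodes (s/coll-of ::node :kind vector?))
(s/def ::scene (s/keys :req [:image/size :image/nodes]))

(defn explain-scene
  "Returns one message per problem (\"at <path>: <predicate>, got: <value>\"),
  or nil when the scene is valid."
  [scene]
  (when-let [data (s/explain-data ::scene scene)]
    (mapv (fn [{:keys [in pred val]}]
            (str "at " in ": " (pr-str pred) ", got: " (pr-str val)))
          (::s/problems data))))

;; Opt-out switch, mimicking the one described above.
(def ^:dynamic *validate* true)

(defn checked-scene
  "Returns the scene unchanged; throws ex-info carrying every message if invalid."
  [scene]
  (when *validate*
    (when-let [errors (explain-scene scene)]
      (throw (ex-info (str "Invalid scene: " (count errors) " validation errors")
                      {:errors errors}))))
  scene)
```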

Step 3: Semantic IR

The scene map is compiled into a semantic intermediate representation — a structured container that preserves your intent. Shapes become draw items with separate slots for geometry, fill, stroke, effects, and transforms. Generators and procedural fills are kept as-is — they haven't been expanded yet.

```clojure
;; A circle node becomes a draw item:
{:item/geometry {:geometry/type :circle
                 :geometry/cx 200 :geometry/cy 150
                 :geometry/r 80}
 :item/fill {:r 255 :g 127 :b 80 :a 1.0}
 :item/stroke nil
 :item/opacity 1.0
 :item/transforms []}

;; A flow-field generator is preserved, not yet expanded:
{:item/generator {:generator/type :flow-field
                  :flow-field/bounds [0 0 400 300]
                  :flow-field/opts {:density 20 :steps 30 :seed 42}}
 :item/fill {:r 0 :g 0 :b 0 :a 1.0}}
```

Why two layers? Because generators like flow fields produce hundreds of path nodes when expanded. Keeping them as compact descriptions in the semantic IR means you can inspect, serialize, and diff scenes efficiently. The expansion happens in the next step, lowering.
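To make this step concrete, here is a hypothetical compile-node that turns the circle node from Step 1 into a draw item shaped like the one above. The named-color table covers only the colors used in this article. Stroke, opacity, and transforms are filled with defaults. This does not reproduce Eido's real compiler or its color handling in eido.color.

```clojure
(ns eido-sketch.compile)

;; Hypothetical lookup table covering only the colors used in these examples.
(def named-colors
  {"coral"     {:r 255 :g 127 :b 80  :a 1.0}
   "steelblue" {:r 70  :g 130 :b 180 :a 1.0}
   "linen"     {:r 250 :g 240 :b 230 :a 1.0}})

(defn resolve-color
  "Turns a color form like [:color/name \"coral\"] into an RGBA map; nil stays nil."
  [color]
  (when color
    (let [[kind value] color]
      (case kind
        :color/name (or (get named-colors value)
                        (throw (ex-info "Unknown color name" {:color color})))
        (throw (ex-info "Unsupported color form" {:color color}))))))

;; One method per node type; this sketch only implements circles.
(defmulti compile-node :node/type)

(defmethod compile-node :shape/circle [node]
  (let [[cx cy] (:circle/center node)]
    {:item/geometry   {:geometry/type :circle
                       :geometry/cx   cx
                       :geometry/cy   cy
                       :geometry/r    (:circle/radius node)}
     :item/fill       (resolve-color (:style/fill node))
     ;; Defaults: this sketch ignores stroke, opacity and transform keys.
     :item/stroke     nil
     :item/opacity    1.0
     :item/transforms []}))
```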

The IR container wraps everything in a rendering pass structure:

```clojure
{:ir/version 1
 :ir/size [400 300]
 :ir/background {:r 250 :g 240 :b 230 :a 1.0}
 :ir/passes [{:pass/id :draw-main
              :pass/type :draw-geometry
              :pass/items [draw-item-1 draw-item-2 ...]}]
 :ir/outputs {:default :framebuffer}}
```

Step 4: Lowering

This is where the magic happens. Lowering walks the semantic IR and expands everything into concrete drawing operations. Generators become shapes. Procedural fills become clipped lines or dots. Effects become offscreen buffer operations.

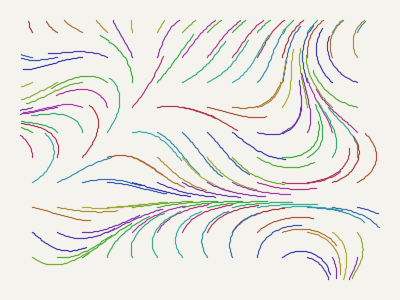

Generator expansion

A single flow-field description expands into dozens or hundreds of concrete path ops:

```clojure
;; Before lowering (semantic IR):
{:generator/type :flow-field
 :flow-field/bounds [0 0 400 300]
 :flow-field/opts {:density 25 :steps 30 :seed 42}}

;; After lowering (concrete ops):
[PathOp{:commands [[:move-to [23 45]] [:line-to [25 47]] ...]}
 PathOp{:commands [[:move-to [67 12]] [:line-to [69 14]] ...]}
 PathOp{:commands [[:move-to [112 89]] [:line-to [114 91]] ...]}
 ... ;; ~80 path ops from one generator
 ]
```

Each generator type calls its corresponding eido.gen.* module — flow fields call eido.gen.flow/flow-field, scatter calls eido.gen.scatter/scatter->nodes, and so on. The lowering step bridges the gap between the artist's intent and the renderer's needs.
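This page does not show the source of eido.gen.flow/flow-field, so the following is a toy flow field built on stated assumptions:

- Seed points are jittered inside a grid of density-pixel cells by a seeded java.util.Random.
- A smooth trigonometric direction field stands in for noise.
- Each path takes unit-length steps until :steps is reached or the next point would leave the bounds.

It illustrates the shape of the expansion: one compact description in, a vector of path-op maps out. It uses StrictMath so the same seed produces the same paths regardless of platform-specific Math intrinsics.

```clojure
(ns eido-sketch.flow
  (:import (java.util Random)))

(defn- angle-at
  "Direction of the field at (x, y) in radians: a smooth stand-in for noise."
  [x y]
  (+ (StrictMath/sin (* 0.02 x)) (StrictMath/cos (* 0.015 y))))

(defn- in-bounds? [[bx by bw bh] [x y]]
  (and (<= bx x (+ bx bw)) (<= by y (+ by bh))))

(defn- trace
  "Follows the field from start in unit-length steps, stopping after steps
  steps or when the next point would leave bounds."
  [bounds start steps]
  (loop [pts [start]]
    (let [[px py] (peek pts)
          a       (angle-at px py)
          nxt     [(+ px (StrictMath/cos a)) (+ py (StrictMath/sin a))]]
      (if (or (> (count pts) steps) (not (in-bounds? bounds nxt)))
        pts
        (recur (conj pts nxt))))))

(defn flow-field
  "Expands a flow-field description into a vector of path-op maps.
  The same description (including :seed) always yields the same paths."
  [{:flow-field/keys [bounds opts]}]
  (let [[bx by bw bh]                bounds
        {:keys [density steps seed]} opts
        rng                          (Random. (long seed))]
    (vec (for [gx (range bx (+ bx bw) density)
               gy (range by (+ by bh) density)
               ;; jitter each seed point inside its grid cell
               :let  [start [(+ gx (* density (.nextDouble rng)))
                             (+ gy (* density (.nextDouble rng)))]]
               :when (in-bounds? bounds start)
               :let  [pts (trace bounds start steps)]
               :when (> (count pts) 1)]
           {:op/type  :path
            :commands (into [[:move-to (first pts)]]
                            (map (fn [p] [:line-to p]) (rest pts)))}))))
```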

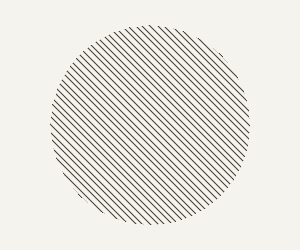

Fill expansion

Procedural fills like hatching and stippling are expanded into actual geometry, clipped to the shape they fill:

```clojure
;; Before: a circle with a hatch fill (semantic IR)
{:geometry/type :circle :geometry/r 80
 :fill {:fill/type :hatch
        :hatch/angle 45 :hatch/spacing 4}}

;; After: concrete line ops clipped to the circle
[PathOp{:commands [...] :clip circle-area}
 PathOp{:commands [...] :clip circle-area}
 ...]
```
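Here is a sketch of hatch expansion for the circle case. Instead of attaching a clip region as the ops above do, it clips analytically. A hatch line at signed distance d from the center crosses the circle in a chord of half-length sqrt(r^2 - d^2), so each line can be emitted already trimmed. The function name and the plain-map op format are assumptions.

```clojure
(ns eido-sketch.hatch)

(defn hatch-circle
  "Expands a hatch fill into path-op maps, one straight segment per hatch line,
  clipped analytically to the circle centered at (cx, cy) with radius r."
  [{:keys [cx cy r]} {:hatch/keys [angle spacing]}]
  (let [theta (Math/toRadians (double angle))
        ;; unit vector along the hatch lines
        ux (Math/cos theta)
        uy (Math/sin theta)
        ;; unit normal: successive lines are offset from the center along it
        nx (- uy)
        ny ux
        n  (long (Math/floor (/ r spacing)))]
    (vec (for [i (range (- n) (inc n))
               :let  [d (* i spacing)                              ; signed offset
                      h (Math/sqrt (double (- (* r r) (* d d))))]  ; half chord
               :when (pos? h)]                                     ; skip tangents
           (let [mx (+ cx (* d nx))
                 my (+ cy (* d ny))]
             {:op/type  :path
              :commands [[:move-to [(- mx (* h ux)) (- my (* h uy))]]
                         [:line-to [(+ mx (* h ux)) (+ my (* h uy))]]]})))))
```

Analytic clipping only works for simple shapes such as circles and rectangles. For arbitrary paths, attaching the shape as a clip region, as in the ops above, is the general solution.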

Effect wrapping

Effects like shadows and glows become offscreen buffer operations — the shape is painted to a temporary image, the effect is applied, then composited onto the main canvas:

```clojure
;; Shadow effect → duplicate shape + blur + offset
BufferOp{:composite :src-over
         :filter {:type :blur :radius 8}
         :transforms [[:translate 4 4]]
         :ops [CircleOp{...shadow-color...}]}
CircleOp{...original-shape...}
```
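Here is a sketch of the shadow wrapping as a pure function over op maps. The output keys mirror the BufferOp shown above. The function name wrap-shadow, the option keys (:dx, :dy, :blur, :color), and the use of plain maps instead of records are assumptions.

```clojure
(ns eido-sketch.effect)

(defn wrap-shadow
  "Returns [shadow-buffer-op original-op]: a blurred, offset copy of op painted
  in the shadow color, followed by the untouched original."
  [op {:keys [dx dy blur color]}]
  [{:op/type    :buffer
    :composite  :src-over
    :filter     {:type :blur :radius blur}
    :transforms [[:translate dx dy]]
    ;; the shadow copy keeps the geometry but swaps in the shadow color
    :ops        [(assoc op :fill color :stroke-color nil)]}
   op])
```

The shadow comes first in the returned vector. When ops are painted top to bottom, the original shape then lands on top of its shadow.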

Step 5: Concrete Ops

After lowering, the entire scene is a flat vector of records, one per visible shape. No more nesting (apart from BufferOp compositing groups, which carry their own child ops), no more generators, no more deferred computation. Just a sequence of drawing instructions:

```clojure
[CircleOp {:cx 200 :cy 150 :r 80
           :fill {:r 255 :g 127 :b 80 :a 1.0}
           :stroke-color nil :opacity 1.0
           :transforms [] :clip nil}
 RectOp {:x 50 :y 50 :w 100 :h 60
         :fill {:r 70 :g 130 :b 180 :a 1.0}
         :stroke-color nil :opacity 1.0
         :transforms [] :clip nil}]
```

Each op is a Clojure record: a compiled JVM class with O(1) field access that still implements IPersistentMap, so you can use (:cx op) like a regular map. The op types are:

- RectOp: rectangles (with optional corner radius)
- CircleOp: circles
- EllipseOp: ellipses
- ArcOp: arcs and pie slices
- LineOp: line segments
- PathOp: arbitrary paths (bezier curves, polygons, freeform)
- BufferOp: compositing groups (contains child ops, rendered to an offscreen buffer)
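Here is a small illustration of the record trade-off. It uses a hypothetical CircleOp record with the fields shown for CircleOp above; Eido's actual definition may differ. The same value answers keyword lookup, direct Java field access, and map predicates.

```clojure
(ns eido-sketch.ops)

;; Hypothetical record with the fields shown for CircleOp above.
(defrecord CircleOp [cx cy r fill stroke-color opacity transforms clip])

(def circle-op
  (map->CircleOp {:cx 200 :cy 150 :r 80
                  :fill {:r 255 :g 127 :b 80 :a 1.0}
                  :stroke-color nil :opacity 1.0
                  :transforms [] :clip nil}))

(defn radius
  "Reads the r field directly as a Java field; the type hint avoids reflection."
  [^CircleOp op]
  (.-r op))
```

One consequence worth knowing: dissoc on a declared field quietly turns the record into an ordinary map. Code that builds ops should therefore keep every field present and use nil for absent values, as the ops above do.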

This flat structure is what the renderer consumes. It's also what the SVG exporter reads — both backends work from the same concrete ops, just painting to different targets.

Step 6: Rendering

The renderer walks the op vector top to bottom, painting each shape onto a BufferedImage using Java2D's Graphics2D API. For each op:

```
;; Pseudocode for the rendering loop:
(for-each op in ops
  1. Save Graphics2D state
  2. Apply transforms (translate, rotate, scale)
  3. Set clip region (if present)
  4. Set opacity via AlphaComposite
  5. Convert geometry to Java2D Shape
  6. Fill the shape (solid, gradient, or texture)
  7. Stroke the shape (if stroke specified)
  8. Restore Graphics2D state)
```

BufferOp groups get special handling. Their children are rendered to a temporary offscreen image and post-processing filters (blur, grain, posterize) are applied. The result is then composited onto the main canvas using the specified blend mode (:src-over, :multiply, :screen, etc.).

Java2D handles antialiasing, sub-pixel positioning, and bezier curve rasterization. Eido doesn't implement a software rasterizer — it leans on the JVM's mature 2D graphics stack.
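Here is a much-reduced, runnable version of that loop. It assumes plain op maps with an :op/type key. It handles only circles and rectangles with solid fills, opacity, and [:translate x y] transforms; clipping, strokes, gradients, and BufferOp are left out. The Java2D calls (BufferedImage, Graphics2D, AlphaComposite, Ellipse2D.Double, Rectangle2D.Double) are standard, but the op format and function names are assumptions, not Eido's renderer.

```clojure
(ns eido-sketch.render
  (:import (java.awt AlphaComposite Color RenderingHints)
           (java.awt.geom Ellipse2D$Double Rectangle2D$Double)
           (java.awt.image BufferedImage)))

(defn- ->color [{:keys [r g b a]}]
  (Color. (int r) (int g) (int b) (int (Math/round (* 255.0 (double a))))))

(defn- op->shape [op]
  (case (:op/type op)
    :circle (let [{:keys [cx cy r]} op]
              (Ellipse2D$Double. (- cx r) (- cy r) (* 2 r) (* 2 r)))
    :rect   (let [{:keys [x y w h]} op]
              (Rectangle2D$Double. x y w h))))

(defn render-ops
  "Paints op maps onto a new ARGB image, in vector order (later ops on top)."
  [[w h] background ops]
  (let [img (BufferedImage. (int w) (int h) BufferedImage/TYPE_INT_ARGB)
        g   (.createGraphics img)]
    (try
      (.setRenderingHint g RenderingHints/KEY_ANTIALIASING RenderingHints/VALUE_ANTIALIAS_ON)
      (.setColor g (->color background))
      (.fillRect g 0 0 (int w) (int h))
      (doseq [op ops]
        ;; save the state this op is about to change
        (let [saved-transform (.getTransform g)
              saved-composite (.getComposite g)]
          ;; apply transforms (only :translate in this sketch)
          (doseq [[kind tx ty] (:transforms op)]
            (when (= kind :translate)
              (.translate g (double tx) (double ty))))
          ;; opacity via AlphaComposite
          (.setComposite g (AlphaComposite/getInstance AlphaComposite/SRC_OVER
                                                       (float (or (:opacity op) 1.0))))
          ;; convert geometry to a Java2D Shape and fill it
          (when-let [fill (:fill op)]
            (.setColor g (->color fill))
            (.fill g (op->shape op)))
          ;; restore state for the next op
          (.setTransform g saved-transform)
          (.setComposite g saved-composite)))
      img
      (finally (.dispose g)))))
```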

Step 7: Output

The BufferedImage is the universal intermediate. Every raster output format reads from it:

- PNG: via ImageIO.write (with optional DPI metadata for print)
- JPEG: ARGB composited onto white, then written with a quality setting
- GIF: single frame via ImageIO, animated via a custom GIF encoder that writes frame delays and loop flags
- BMP: via ImageIO (RGB)
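The JPEG path can be sketched with standard Java2D and javax.imageio calls. JPEG has no alpha channel, so the ARGB image is first painted onto an opaque white RGB image. The quality setting goes through an ImageWriteParam in explicit compression mode. The function names are hypothetical, and this is not Eido's own writer.

```clojure
(ns eido-sketch.jpeg
  (:import (java.awt Color)
           (java.awt.image BufferedImage)
           (java.io ByteArrayOutputStream)
           (javax.imageio IIOImage ImageIO ImageWriteParam ImageWriter)))

(defn flatten-onto-white
  "Composites an ARGB image onto an opaque white RGB image of the same size."
  ^BufferedImage [^BufferedImage argb]
  (let [w   (.getWidth argb)
        h   (.getHeight argb)
        rgb (BufferedImage. w h BufferedImage/TYPE_INT_RGB)
        g   (.createGraphics rgb)]
    (try
      (.setColor g Color/WHITE)
      (.fillRect g 0 0 w h)
      (.drawImage g argb 0 0 nil)   ; transparent pixels end up white
      rgb
      (finally (.dispose g)))))

(defn write-jpeg-bytes
  "Encodes an ARGB image as JPEG bytes at the given quality (0.0 to 1.0)."
  ^bytes [^BufferedImage argb quality]
  (let [^ImageWriter writer (.next (ImageIO/getImageWritersByFormatName "jpeg"))
        param (doto (.getDefaultWriteParam writer)
                (.setCompressionMode ImageWriteParam/MODE_EXPLICIT)
                (.setCompressionQuality (float quality)))
        out   (ByteArrayOutputStream.)]
    (try
      (with-open [ios (ImageIO/createImageOutputStream out)]
        (.setOutput writer ios)
        (.write writer nil (IIOImage. (flatten-onto-white argb) nil nil) param))
      (.toByteArray out)
      (finally (.dispose writer)))))
```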

SVG takes a completely different path — it reads the concrete ops directly and emits XML elements (<rect>, <circle>, <path>) instead of painting pixels. Same ops, different output.
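Here is a toy version of that idea. Op maps shaped like the CircleOp and RectOp records from Step 5 are turned into SVG elements by a multimethod. It handles only circles and rectangles with solid fills, and the op keys are assumptions. A real exporter also needs paths, strokes, transforms, and XML escaping.

```clojure
(ns eido-sketch.svg
  (:require [clojure.string :as str]))

(defn- fill-attrs
  "SVG fill attributes for an RGBA map, or fill=\"none\" when there is no fill."
  [{:keys [r g b a] :as fill}]
  (if fill
    (format "fill=\"rgb(%s,%s,%s)\" fill-opacity=\"%s\"" r g b a)
    "fill=\"none\""))

(defmulti op->svg :op/type)

(defmethod op->svg :circle [{:keys [cx cy r fill]}]
  (format "<circle cx=\"%s\" cy=\"%s\" r=\"%s\" %s/>" cx cy r (fill-attrs fill)))

(defmethod op->svg :rect [{:keys [x y w h fill]}]
  (format "<rect x=\"%s\" y=\"%s\" width=\"%s\" height=\"%s\" %s/>"
          x y w h (fill-attrs fill)))

(defn ops->svg
  "Wraps the elements for ops, in paint order, in a root <svg> element."
  [[w h] ops]
  (str "<svg xmlns=\"http://www.w3.org/2000/svg\" width=\"" w "\" height=\"" h "\">"
       (str/join (map op->svg ops))
       "</svg>"))
```

SVG paints elements in document order. Emitting ops in vector order therefore gives the same stacking as the raster renderer's top-to-bottom walk.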

Animations are just sequences of scenes. Eido renders each frame independently, then stitches them together:

```clojure
;; 60 scenes → 60 BufferedImages → animated GIF
(eido/render
  (anim/frames 60
    (fn [t] {:image/size [400 300] ...}))
  {:output "animation.gif" :fps 30})
```

Design Decisions

Why two IR layers?

The semantic IR keeps the artist's intent intact — a flow field is one compact description, not 200 path nodes. This makes scenes diffable, serializable, and inspectable. The concrete IR is optimized for rendering — flat, no generators, every shape fully resolved. Separating these concerns means you can work with scenes at the right level of abstraction for each task.

Why CPU rendering?

Java2D runs everywhere the JVM runs — no GPU drivers, no platform-specific shader compilation, no WebGL context limits. The output is deterministic (same input → same pixels, always), which matters for reproducible generative art. For the image sizes generative artists typically work with (up to ~4K), CPU rendering is fast enough. A GPU backend could be added later by implementing the concrete ops → pixels step without changing anything else.

Why records for concrete ops?

defrecord gives O(1) field access (compiled JVM class) while still acting as an immutable map. The renderer touches :cx, :cy, :fill etc. on every op — fast field access matters in the inner loop.

Why expand generators during lowering?

Generators depend on geometry for their output (a flow field needs to know its bounds, a hatch fill needs the shape it's filling). By the time lowering runs, geometry is resolved. Expanding earlier would require passing incomplete information; expanding later would force the renderer to understand generators. Lowering is the natural boundary.

Data all the way down

Every intermediate result in the pipeline is printable, serializable Clojure data. No opaque objects, no hidden state. You can prn the semantic IR, prn the concrete ops, save them to a file, load them back, or write tests against them. Hell, even store them in a Datomic database if you want. This is the core design principle — the image is a value.
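For scene maps this is easy to check: printing with pr-str and reading back with clojure.edn/read-string yields an equal value. In the sketch below, ->edn, save-edn! and load-edn are hypothetical helper names. Records such as the concrete ops print as tagged literals (#some.ns.CircleOp{...}), so reading them back through clojure.edn needs a matching entry in its :readers option.

```clojure
(ns eido-sketch.roundtrip
  (:require [clojure.edn :as edn]))

(def scene
  {:image/size       [400 300]
   :image/background [:color/name "linen"]
   :image/nodes      [{:node/type     :shape/circle
                       :circle/center [200 150]
                       :circle/radius 80
                       :style/fill    [:color/name "coral"]}]})

(defn ->edn
  "Prints a value as EDN text. Namespaced-map shorthand (#:image{...}) is
  switched off, because the standard REPL turns it on."
  [value]
  (binding [*print-namespace-maps* false]
    (pr-str value)))

(defn save-edn! [path value]
  (spit path (->edn value)))

(defn load-edn [path]
  (edn/read-string (slurp path)))
```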

Source Map

Key namespaces and what they do:

| Namespace | Role | Source |
|---|---|---|
| eido.core | Entry point: render, file I/O, format detection | core.clj |
| eido.validate | Scene validation with detailed error messages | validate.clj |
| eido.spec | Spec definitions for nodes, colors, transforms | spec.clj |
| eido.engine.compile | Scene → Semantic IR, concrete op records | compile.clj |
| eido.ir.lower | Semantic IR → Concrete ops (generator expansion, fill resolution) | lower.clj |
| eido.ir.generator | Expands flow-field, scatter, voronoi, contour, etc. | generator.clj |
| eido.ir.fill | Expands hatch and stipple fills into geometry | fill.clj |
| eido.ir.effect | Wraps effects (shadow, glow, blur) as buffer ops | effect.clj |
| eido.engine.render | Concrete ops → BufferedImage via Java2D | render.clj |
| eido.engine.svg | Concrete ops → SVG XML string | svg.clj |
| eido.engine.gif | Animated GIF encoder | gif.clj |
| eido.gen.* | Generative modules (noise, flow, circle packing, boids, etc.) | gen/ |
| eido.color | Color parsing, conversion, and manipulation | color.clj |
| eido.scene | Layout helpers and node constructors | scene.clj |